If pedophiles won’t be able to tell what’s real and what’s AI-generated, why risk jail to create the real ones?

Give money to open source projects, invest in distributed infrastructure, kick out big tech.

Cancel them anyway.

So you think that to train AI you just show it random images without describing what they represent, and the AI just magically learns? If I then ask the AI to create an image of a computer, how does it know what a computer is? Does it just learn this on its own from all the random images?

But you have to describe it. It doesn’t just suck in images at random. I imagine someone will remove CP when the images are reviewed. Or do you think they just download all images and add them to the training set without even looking at them?

What AI are you talking about? Are you suggesting the commercial models from OpenAI are trained using CP? Or just that there are some models out there that were trained using CP? Because yeah, anyone can create a model at home and train it with whatever. But suggesting that OpenAI has a DB of tagged CP is a different story.

You know what that means? Time for world leaders to write some very harsh ‘we don’t know anything until the formal investigation is finalized’ bullshit letters.

Only one thing to do: set two pedophile hunters up on a date.

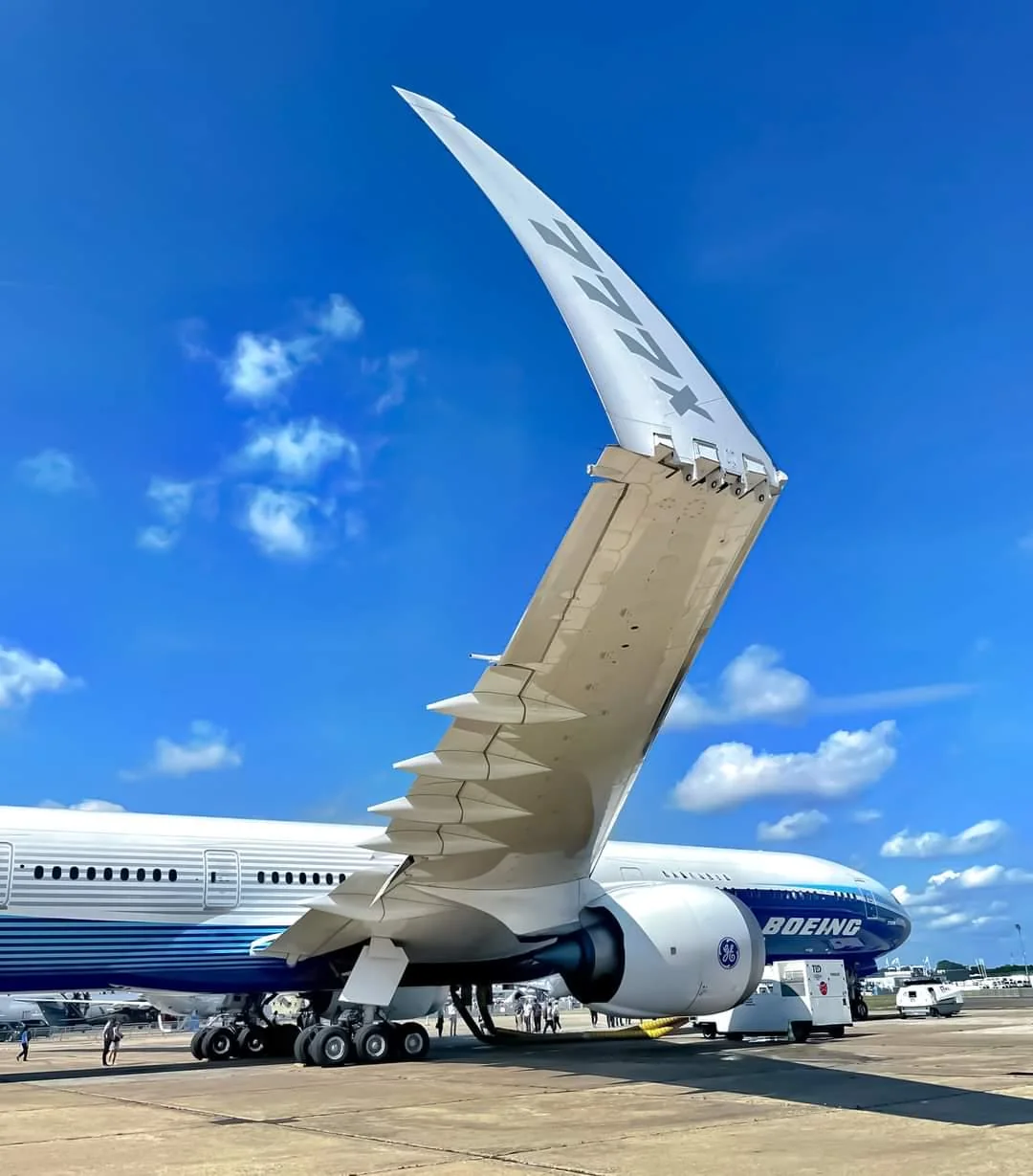

If you listen to the actual talk, the bird they are talking about is an albatross. They are simply saying that to improve efficiency you need to make the wings longer and slimmer, but then the plane will not fit in current airport gates, so they are working on folding wings.

Selective as in going after the biggest, most important offenders first? I sure hope they do.